Stop Identity Fraud. Trust the Right Customers.

How It Works

What You Usually See

Basic applicant details: name, email, phone. No obvious issues — but also no context. No intent signal. No behavioral layer.

Signal Ingestion

Heka analyzes 30+ real-time web signals — reputation, address discrepancies, behavioral anomalies, alias cycling, and more.

Explainable Structuring

Signals are correlated, risk tags are generated, and a trust score is assigned — with full transparency.

Actionable, Explainable Output

A decision‑ready fraud risk score (0–100), built from 20+ verified fraud signals that reflect the true risk level of a digital persona.

We return only what you need — clear, evidence-based results, with links to source.
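To make the idea of an explainable score concrete, here is a toy Python sketch. It is not Heka's model. Each triggered signal carries a weight, a risk tag, and a source link, and the score is the sum of weights clamped to the 0–100 range. The `Signal` class, the signal names, the weights, and the example URLs are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Signal:
    """One observed web signal (hypothetical structure for illustration)."""
    name: str
    weight: int       # assumed contribution to risk, in score points
    tag: str          # human-readable risk tag
    source_url: str   # evidence link returned alongside the score


def explainable_score(signals: list[Signal]) -> dict:
    """Combine triggered signals into a 0-100 score plus the evidence behind it."""
    raw = sum(s.weight for s in signals)
    score = max(0, min(100, raw))  # clamp the summed weights to 0-100
    return {
        "score": score,
        "tags": sorted({s.tag for s in signals}),             # de-duplicated tags
        "evidence": [(s.name, s.source_url) for s in signals],  # one link per signal
    }


if __name__ == "__main__":
    observed = [
        Signal("alias_cycling", 35, "identity_inconsistency", "https://example.com/a"),
        Signal("address_discrepancy", 20, "identity_inconsistency", "https://example.com/b"),
        Signal("no_web_footprint", 30, "thin_footprint", "https://example.com/c"),
    ]
    print(explainable_score(observed))
```

The design point is that the score never travels without its evidence. Every point in the total can be traced back to a named signal and a link.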

Powered by

Heka’s Identity Intelligence Engine


Frequently Asked Questions

Is Heka GDPR-compliant?

Yes. Heka only processes publicly available information and operates under the legal basis of legitimate interest. We do not collect private, login-gated, or credentialed data.

Where is Heka’s data stored?

All data is stored securely on cloud servers hosted by AWS in the EU (Ireland), with full encryption at rest and in transit.

Does Heka use AI or machine learning models?

Yes, but always with transparency. Heka’s AI transforms noisy web signals into structured, explainable outputs with traceable sources. No black boxes.

How does Heka ensure data accuracy?

Signals are cross-referenced, filtered, and scored by our system to reduce false positives and surface verifiable insights. Clients receive source links and contextual evidence for every insight, ensuring auditability and trust.

What kind of data does Heka collect and process?

Heka processes publicly available, non-credentialed information from the open web. We do not collect or store personal data. Our system structures and analyzes signals from public sources to deliver risk-relevant insights.

Explore More Resources


The Modern Fraud Stack: How Decisions Actually Get Made (and Where They Break)

An enterprise-grade fraud stack is not a product. It is a latency-constrained decisioning system in which multiple layers – data collection, identity validation, enrichment, scoring, and decisioning – operate as a single flow. For transaction decisions, that entire loop typically runs in under 300 milliseconds; onboarding decisions are allowed only marginally more time.
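As a rough illustration of what a 300-millisecond budget implies, the sketch below adds up per-stage latencies for a flow that runs its stages one after another and checks the total against the budget. The stage names follow the layers described in this article. The millisecond figures are invented placeholders, not measurements.

```python
# Hypothetical per-stage latencies in milliseconds (placeholders, not benchmarks).
STAGE_LATENCY_MS = {
    "signal_collection": 40,
    "identity_verification": 80,
    "data_enrichment": 90,
    "risk_scoring": 25,
    "rules_engine": 10,
    "decisioning": 15,
}

TRANSACTION_BUDGET_MS = 300


def remaining_budget(latencies: dict[str, int], budget_ms: int) -> int:
    """Return the milliseconds left after running every stage in sequence.

    A negative result means the sequential flow overruns the budget.
    """
    return budget_ms - sum(latencies.values())


if __name__ == "__main__":
    left = remaining_budget(STAGE_LATENCY_MS, TRANSACTION_BUDGET_MS)
    print(f"Headroom: {left} ms")
```

With these placeholder numbers, the flow uses 260 ms and leaves 40 ms of headroom. A single extra enrichment call that adds 50 ms would push it over budget. Real stacks often run some stages in parallel, so a sequential sum is a conservative upper bound rather than an exact figure.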

The challenge is not assembling the stack. Most institutions already have the core components in place, often across multiple vendors and internal systems. The challenge is understanding how those components interact in practice – and where the system produces decisions that appear well-supported, but are not.

How Fraud Decisions Are Produced

A fraud decision is not generated by a single model or rule. It is the result of a sequence of stages, each contributing a different type of signal or constraint.

At a high level, the system collects observable signals, validates identity claims, enriches those signals with external data, applies probabilistic scoring, enforces deterministic rules, and aggregates all outputs into a final decision. Cases that fall outside clear thresholds are escalated, and outcomes are fed back into the system to continuously refine performance.

This flow is consistent across financial institutions, even where implementation details differ. What varies is the relative strength of each layer, and the degree to which each one contributes meaningful signal to the final decision.

The 8 Layers of the Fraud Stack

In practice, this decisioning flow can be broken down into eight functional layers:

1. Signal Collection

The system captures all observable inputs at the point of interaction, including device fingerprinting, IP intelligence, behavioral biometrics, and identity data. These signals form the raw input for all downstream analysis.

2. Identity Verification (IDV)

Identity attributes are validated against trusted sources such as credit bureau headers, SSA records, and sanctions lists. This establishes whether the identity exists and meets regulatory requirements.

3. Data Enrichment

External data sources are used to expand the identity profile. This includes email intelligence, phone intelligence, address validation, and consortium-based signals that provide additional context beyond the initial claim.

4. Risk Scoring

Machine learning models transform raw and enriched signals into probabilistic risk scores. These models typically target specific fraud types, including application fraud, synthetic identity fraud, and account takeover.
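Many scoring models output a probability. The minimal sketch below assumes a logistic-regression-style model. Its coefficients and feature names are hypothetical and were picked by hand; a production model would learn them from labeled outcomes. The sketch shows how enriched features become a probabilistic risk score.

```python
import math

# Hypothetical coefficients for a toy application-fraud model.
INTERCEPT = -4.0
COEFFICIENTS = {
    "email_age_days_log": -0.6,        # older email addresses lower risk
    "phone_is_voip": 1.5,              # VoIP numbers raise risk
    "address_mismatch": 1.2,           # claimed vs. observed address differ
    "device_seen_on_other_ids": 2.0,   # device linked to other identities
}


def fraud_probability(features: dict[str, float]) -> float:
    """Logistic transform of a weighted feature sum into a 0-1 probability.

    Feature names not present in COEFFICIENTS raise a KeyError.
    """
    z = INTERCEPT + sum(COEFFICIENTS[k] * v for k, v in features.items())
    return 1.0 / (1.0 + math.exp(-z))


if __name__ == "__main__":
    applicant = {
        "email_age_days_log": math.log1p(3),  # email created 3 days ago
        "phone_is_voip": 1.0,
        "address_mismatch": 1.0,
        "device_seen_on_other_ids": 1.0,
    }
    print(round(fraud_probability(applicant), 3))
```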

5. Rules Engine

Deterministic rules enforce policy and known fraud patterns. These include hard blocks (e.g., sanctions matches), velocity thresholds, and mismatch conditions that cannot be fully captured by models.
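Deterministic rules can be expressed as simple predicates that are evaluated in order. The sketch below is illustrative only. The `Application` fields, the "HARD_BLOCK" naming convention, the velocity limit of three applications per 24 hours, and the rule set itself are assumptions, not recommended values.

```python
from dataclasses import dataclass


@dataclass
class Application:
    """Hypothetical inputs a rules engine might receive."""
    sanctions_match: bool
    applications_last_24h: int   # velocity: attempts linked to this identity
    name_matches_bureau: bool
    dob_matches_bureau: bool


def evaluate_rules(app: Application, velocity_limit: int = 3) -> list[str]:
    """Return the names of every rule the application trips.

    Rules prefixed 'HARD_BLOCK' are meant to force a decline regardless of
    any model score; the others are softer policy signals.
    """
    hits = []
    if app.sanctions_match:
        hits.append("HARD_BLOCK:sanctions_match")
    if app.applications_last_24h > velocity_limit:
        hits.append("velocity_exceeded")
    if not (app.name_matches_bureau and app.dob_matches_bureau):
        hits.append("bureau_mismatch")
    return hits


if __name__ == "__main__":
    print(evaluate_rules(Application(False, 5, True, False)))
```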

6. Orchestration & Decisioning

All signals, model outputs, and rule evaluations are aggregated into a final decision – approve, review, or decline – through a centralized decisioning layer.

7. Step-Up & Case Management

Cases that fall into intermediate risk bands are escalated through additional verification (e.g., biometric checks, OTP) or routed to human investigation workflows.
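Layers 6 and 7 can be sketched together as one routing function. A hard-block rule forces a decline. A soft rule hit prevents automatic approval. The model score is then mapped onto approve, step-up, manual-review, and decline bands. The band cut-offs (30, 50, and 70 on a 0–100 scale) and the "HARD_BLOCK" prefix used to mark hard-block rules are hypothetical.

```python
def decide(score: float, rule_hits: list[str],
           approve_below: float = 30, review_at: float = 50,
           decline_at: float = 70) -> str:
    """Aggregate a 0-100 model score and rule hits into a final action."""
    if any(hit.startswith("HARD_BLOCK") for hit in rule_hits):
        return "decline"            # deterministic policy overrides the model
    if score >= decline_at:
        return "decline"
    if score < approve_below and not rule_hits:
        return "approve"
    # Intermediate band or soft rule hits: escalate instead of deciding outright.
    # Lower-risk escalations get automated step-up (e.g. OTP or a biometric
    # check); higher-risk ones are routed to a human investigator.
    return "step_up" if score < review_at else "manual_review"


if __name__ == "__main__":
    for s, hits in [(10, []), (10, ["velocity_exceeded"]), (60, []), (5, ["HARD_BLOCK:sanctions"])]:
        print(s, hits, decide(s, hits))
```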

8. Feedback & Model Governance

Confirmed fraud outcomes, false positives, and analyst decisions are fed back into the system to retrain models, refine rules, and monitor performance over time.
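One concrete governance task is checking how a decline threshold performs against confirmed outcomes. The minimal sketch below assumes each record pairs a model score with a confirmed fraud label. The data in the example is made up for illustration.

```python
def threshold_metrics(records: list[tuple[float, bool]], threshold: float) -> dict:
    """Catch rate and false-positive rate for 'flag if score >= threshold'.

    records: (score, is_confirmed_fraud) pairs, e.g. from analyst or
    chargeback outcomes.
    """
    fraud = [s for s, is_fraud in records if is_fraud]
    good = [s for s, is_fraud in records if not is_fraud]
    caught = sum(s >= threshold for s in fraud)
    false_pos = sum(s >= threshold for s in good)
    return {
        "catch_rate": caught / len(fraud) if fraud else 0.0,
        "false_positive_rate": false_pos / len(good) if good else 0.0,
    }


if __name__ == "__main__":
    outcomes = [(92, True), (75, True), (40, True), (65, False), (20, False), (10, False)]
    for t in (50, 70):
        print(t, threshold_metrics(outcomes, t))
```

In this toy data, raising the threshold from 50 to 70 removes the one false positive and keeps the same two caught cases. With real data, the trade-off is rarely that clean, which is why these metrics are monitored continuously rather than set once.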

This architecture is broadly consistent across the industry. The presence of these layers, however, does not guarantee effective decisioning.

A Practical View of Where Each Layer Contributes (and Where It Breaks)

The following simplified view highlights how each layer contributes to the final decision, and where its limitations typically emerge:

This view is intentionally reductive. Its purpose is not to describe the system exhaustively, but to make visible where signal strength and decision confidence can diverge.

Where Modern Fraud Stacks Fail

Failures rarely occur because a layer is absent. They occur when a layer produces an output that appears sufficient, but lacks underlying depth.

An identity may pass bureau and SSA validation, present no device or velocity risk, and return acceptable enrichment signals. Yet the identity may still lack coherence across time – no consistent footprint, no reinforcing signals, and no evidence of persistence.

This is the central gap.

Most stacks are effective at confirming that an identity exists. Many can confirm that a user is physically present. Far fewer can determine whether the identity behaves like a reliable individual over time.
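A toy check can illustrate the difference between an identity that exists and one that persists. Assume each independent source reports the date it first observed the identity. The sketch then asks whether the footprint is both corroborated and long-lived. The source names, the dates, and the thresholds (three sources, two years) are hypothetical.

```python
from datetime import date


def has_persistent_footprint(first_seen: dict[str, date], as_of: date,
                             min_sources: int = 3, min_years: float = 2.0) -> bool:
    """True if enough independent sources first saw the identity long enough ago."""
    min_days = min_years * 365.25
    old_sources = [src for src, seen in first_seen.items()
                   if (as_of - seen).days >= min_days]
    return len(old_sources) >= min_sources


if __name__ == "__main__":
    today = date(2026, 1, 15)
    established = {"email_provider": date(2014, 3, 1),
                   "social_profile": date(2016, 7, 9),
                   "public_records": date(2012, 5, 20)}
    fresh = {"email_provider": date(2025, 12, 20),
             "social_profile": date(2025, 12, 22),
             "public_records": date(2025, 12, 28)}
    print(has_persistent_footprint(established, today))
    print(has_persistent_footprint(fresh, today))
```

Both identities appear in all three sources, so both "exist". Only the first has a footprint that predates the onboarding moment by years.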

Structural Drivers of These Gaps

These limitations are not purely technical. They are structural.

Latency constraints limit the ability to incorporate deeper or slower data sources. Scale requires reliance on generalized models rather than case-specific analysis. Cost and conversion pressures reduce tolerance for additional friction or enrichment calls.

As a result, systems tend to emphasize:

- structural validation (existence)

- reactive signals (prior exposure)

Both are necessary. Neither is sufficient to fully resolve identity risk.

Why Fraud Stacks Differ in Practice

The “perfect” fraud stack is a myth. In practice, every stack reflects a set of trade-offs – between latency, cost, scale, and risk tolerance. Different institutions prioritize different parts of the system:

From Architecture to Evaluation

Understanding the structure of a fraud stack is necessary, but not sufficient. The more important task is evaluating how the stack behaves under real conditions.

Key questions include:

- Which layers are driving final decisions?

- Where is the system relying on structural validation alone?

- Which signals appear present, but are not materially influencing outcomes?

Fraud does not typically exploit missing components. It exploits the assumptions created by partial signal coverage.
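The first of these questions can be approached from decision logs. The sketch below assumes each logged decision records which layer was decisive. That logging field is hypothetical, and many stacks do not capture it directly. Under that assumption, a simple count shows where non-approve decisions actually come from.

```python
from collections import Counter


def decisive_layer_share(decision_log: list[dict]) -> dict[str, float]:
    """Fraction of non-approve decisions attributed to each layer."""
    layers = [d["decisive_layer"] for d in decision_log if d["action"] != "approve"]
    counts = Counter(layers)
    total = sum(counts.values())
    return {layer: n / total for layer, n in counts.most_common()} if total else {}


if __name__ == "__main__":
    log = [
        {"action": "decline", "decisive_layer": "rules_engine"},
        {"action": "decline", "decisive_layer": "rules_engine"},
        {"action": "review", "decisive_layer": "risk_scoring"},
        {"action": "approve", "decisive_layer": "risk_scoring"},
    ]
    print(decisive_layer_share(log))
```

A layer that rarely appears in this breakdown, despite being deployed, is a candidate for the "present, but not materially influencing outcomes" category.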

Next: Evaluating the Stack in Practice

This report provides a structural view of the modern fraud stack. In the accompanying evaluation guide, we extend this framework to:

- assess the relative strength of each layer

- identify signal gaps and over-dependencies

- map vendor capabilities across the stack

- and isolate the conditions under which structurally valid identities continue to pass controls

Follow us to be notified when the full evaluation guide is released.

The Identity Pivot: Why 2026 is the Year We Stop Fighting AI with AI

The digital trust ecosystem has reached a breaking point. For the last decade, the industry’s defense strategy was built on a simple premise: detecting anomalies in a sea of legitimate behavior. But as we enter 2026, the mechanics of fraud have fundamentally inverted.

With global scam losses crossing $1 trillion and deepfake attacks surging by 3,000%, the line between the authentic and the synthetic has been erased. We are now witnessing the birth of "autonomous fraud" – a landscape where barriers to entry have vanished, and the guardrails are gone.

At Heka, we believe we have reached a critical pivot point. The industry must move beyond the futile arms race of trying to outpace generative models by simply using AI to detect AI. The new objective for heads of fraud and risk leaders is not just detecting attacks; it is verifying life.

Here is how the landscape is shifting in 2026, and why "context" is the only defense left that scales.

The Industrialization of Deception

The most dangerous shift in 2026 is the democratization of high-end attack vectors. What was once the domain of sophisticated syndicates is now accessible to anyone with an internet connection.

This "Fraud as a Service" economy has lowered barriers to entry so drastically that 34% of consumers now report seeing offers to participate in fraud online – an alarmingly steep 89% year-over-year increase.

But the true threat lies in automation. We are witnessing the rise of the "Industrial Smishing Complex." According to insights from the Secret Service, we are seeing SIM farms capable of sending 30 million messages per minute – enough to text every American in under 12 minutes.
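As a sanity check on the 12-minute figure, assume a US population of roughly 340 million and one message per person. The population figure is our approximation, not a number from the cited source.

```latex
t = \frac{340 \times 10^{6}\ \text{messages}}{30 \times 10^{6}\ \text{messages/min}} \approx 11.3\ \text{min}
```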

This is not just spam; it is a volume game powered by AI agents that never sleep. In the "Pig Butchering 2.0" model, automated scam centers are replacing human labor with AI systems that handle the "hook and line" conversations entirely autonomously. When a single bad actor can launch millions of attacks from a one-bedroom apartment, volume becomes a weapon that overwhelms traditional defenses.

The Rise of the "Shapeshifter" and "Dust" Attacks

Traditional fraud prevention relies on identifying outliers – high-value transactions or unusual behaviors. In 2026, fraudsters have inverted this logic using two distinct strategies:

1. The Shapeshifting Agent

Static rules fail against dynamic adversaries. We are now facing "shapeshifting" AI agents that do not follow pre-defined malware scripts. Instead, these agents learn from friction in real-time. If a transaction is declined, the AI adjusts its tactics instantly, using the rejection data to "shapeshift" into a new attack vector. As noted by risk experts, these agents autonomously navigate trial-and-error loops, rendering static rules useless.

2. "Dust" Trails and Horizontal Attacks

While banks watch for the "big heist," fraud rings are executing "horizontal attacks." By skimming small amounts – often around $50 – from thousands of victims simultaneously, attackers create "dust trails" that stay below the investigation thresholds of major institutions.

Data from Sardine.AI indicates that fraud rings are now using fully autonomous systems to execute these attacks across hundreds of merchants simultaneously. Viewed in isolation, a single $50 charge looks like a normal transaction. It is only when viewed through the lens of web intelligence – seeing the shared infrastructure across the wider web – that the attack becomes visible.
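The sketch below illustrates the idea. It assumes each charge record carries a shared-infrastructure key, such as a device fingerprint or hosting IP. Individually small charges are grouped by that key, and a group is flagged once it touches many different victims. The field names and the thresholds ($100 and 20 distinct victims) are hypothetical.

```python
from collections import defaultdict


def flag_dust_clusters(charges: list[dict], max_amount: float = 100.0,
                       min_victims: int = 20) -> dict[str, int]:
    """Map infrastructure key -> distinct victim count for suspicious clusters.

    Each charge: {"amount": float, "victim_id": str, "infra_key": str}.
    Only small charges are considered; large ones are assumed to be handled
    by conventional per-transaction controls.
    """
    victims_by_infra = defaultdict(set)
    for c in charges:
        if c["amount"] <= max_amount:
            victims_by_infra[c["infra_key"]].add(c["victim_id"])
    return {key: len(v) for key, v in victims_by_infra.items() if len(v) >= min_victims}


if __name__ == "__main__":
    ring = [{"amount": 49.99, "victim_id": f"v{i}", "infra_key": "device-abc"}
            for i in range(25)]
    normal = [{"amount": 52.00, "victim_id": "v100", "infra_key": "device-xyz"}]
    print(flag_dust_clusters(ring + normal))
```

Each charge in the ring passes a per-transaction check on its own. The pattern only emerges once charges are grouped by what they share.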

The "Back to Branch" Regression

Perhaps the most alarming trend in 2026 is the erosion of confidence in digital channels. Because AI-generated identities and deepfakes have reached such sophistication, 75% of financial institutions admit their verification technology now produces inconsistent results.

This failure has triggered a defensive regression: the return to physical branches. Gartner estimates that 30% of enterprises no longer trust biometrics alone, leading some banks to demand customers appear in person for identity proofing.

While this stops the immediate bleeding, it is a strategic failure. Forcing customers back to the branch introduces massive friction without solving the core problem. As industry experts note, if a teller reviews a driver's license "as if it's 1995" while facing a fraudster with perfect AI-generated documentation, we are merely adding inconvenience, not security.

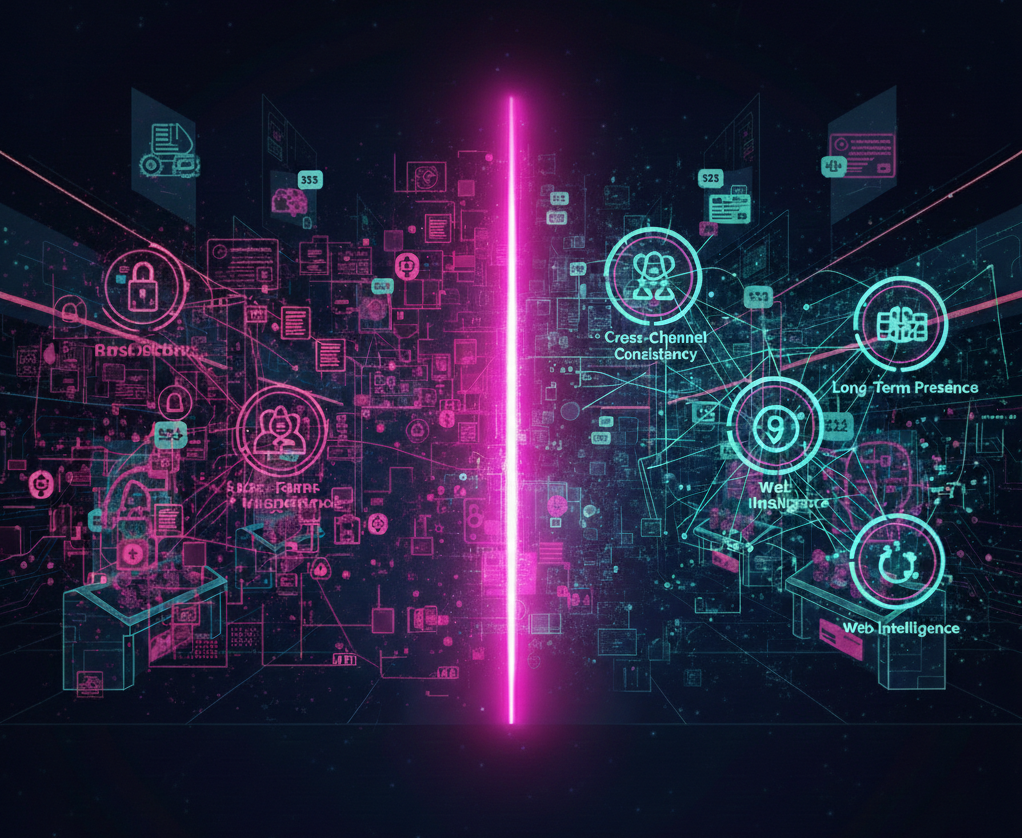

The Solution: Context is the New Identity

The issue facing our industry is not a failure of digital identity itself; it is a failure of context.

Trust is fragile when it relies on a single signal, like a document scan or a selfie. In an AI-versus-AI world, seeing is no longer believing. However, while AI can fabricate a driver's license or a video feed, it consistently fails to recreate the messy, organic digital footprint of a real human being.

To survive the 2026 threat landscape, organizations must pivot toward:

1. Web Intelligence: Linking signals together to see the wider web of interactions rather than isolated events.

2. Long-Term, Consistent Presence: Analyzing the continuity of an identity over time. Real humans have history. Synthetic identities, no matter how polished, lack the depth of a long-term digital existence.

3. Cross-Channel Consistency: Looking for the shared infrastructure and overlapping identities that horizontal attacks inevitably leave behind.
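At its simplest, linking signals into a wider web is a connected-components problem. The sketch below uses union-find to group applications that share any attribute value (email, phone, device). The attribute names and records are hypothetical. A real system would also weight how unusual a shared value is, since common values would otherwise over-link unrelated people.

```python
def link_identities(records: dict[str, dict[str, str]]) -> list[set[str]]:
    """Group record IDs that are connected through any shared attribute value."""
    parent = {rid: rid for rid in records}

    def find(x: str) -> str:
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path halving keeps trees shallow
            x = parent[x]
        return x

    def union(a: str, b: str) -> None:
        parent[find(a)] = find(b)

    # Remember the first record seen with each (field, value) pair and
    # merge every later record carrying the same pair into its group.
    first_owner: dict[tuple[str, str], str] = {}
    for rid, attrs in records.items():
        for field, value in attrs.items():
            key = (field, value)
            if key in first_owner:
                union(rid, first_owner[key])
            else:
                first_owner[key] = rid

    groups: dict[str, set[str]] = {}
    for rid in records:
        groups.setdefault(find(rid), set()).add(rid)
    return list(groups.values())


if __name__ == "__main__":
    apps = {
        "app1": {"email": "a@x.com", "phone": "555-0101", "device": "d1"},
        "app2": {"email": "b@y.com", "phone": "555-0101", "device": "d2"},
        "app3": {"email": "c@z.com", "phone": "555-0199", "device": "d2"},
        "app4": {"email": "d@w.com", "phone": "555-0142", "device": "d9"},
    }
    print(link_identities(apps))
```

Here app1 and app3 share nothing directly. They end up in the same group through app2, which is exactly the kind of indirect overlap that isolated, per-application checks miss.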

The 2026 Takeaway

The future offers a clear path forward. Fraud prevention is no longer about beating a single control – it is about bridging the gaps between them.

While identity and behavior are easier to fake in isolation, the real advantage lies in the complexity of real-world signals. These are the signals that remain expensive to manufacture at scale. Organizations that embrace this context-driven approach will do more than just stop the $1 trillion wave of autonomous fraud; they will unlock a seamless experience where trust is automatic.

Stay informed. Stay adaptive. Stay ahead.

At Heka Global, our platform delivers real-time, explainable intelligence from thousands of global data sources to help fraud teams spot non-human patterns, identity inconsistencies, and early lifecycle divergence long before losses occur.


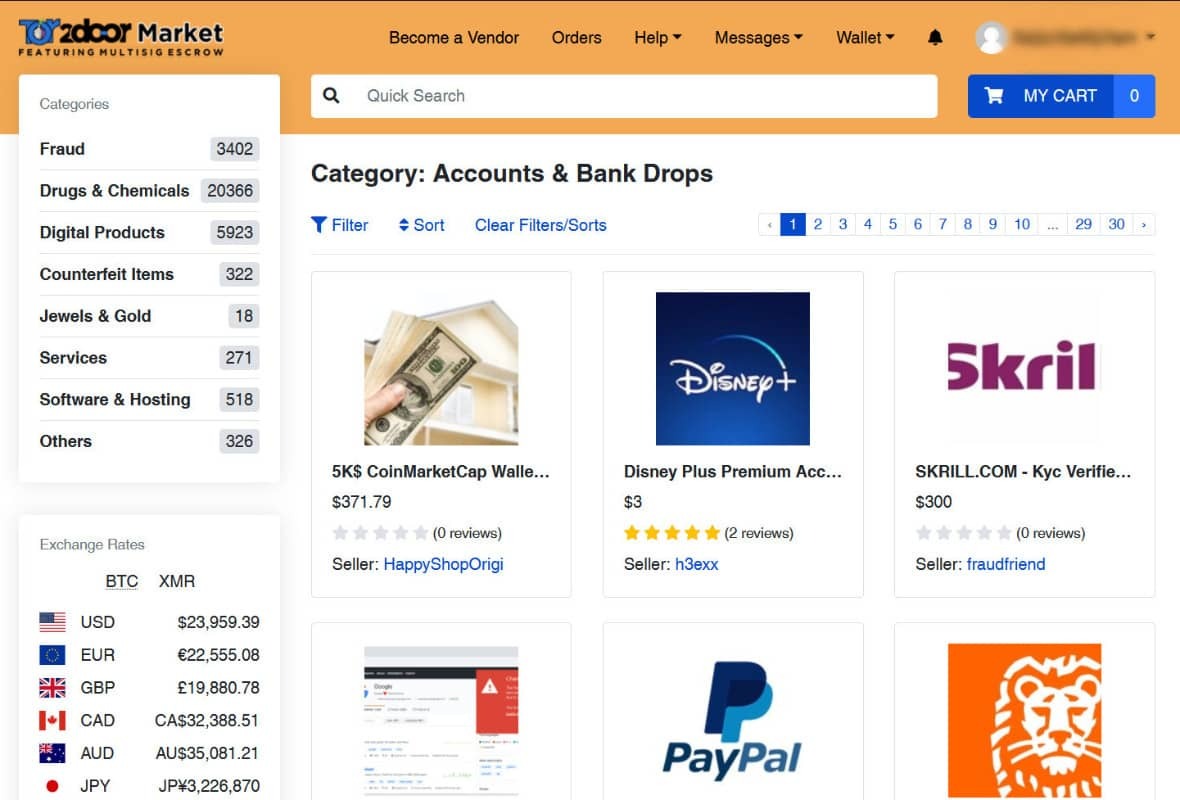

Fraud-as-a-Service: Inside the Industrial Economy Reinventing Digital Crime

Fraud is no longer a technical skill. It’s a shopping experience.

What used to require specialized knowledge, custom scripting, and underground connections is now available through polished marketplaces that look indistinguishable from mainstream e-commerce platforms. Scrollable product cards. Star ratings. Tiered subscriptions. “Customers also bought…” recommendations.

Fraud-as-a-Service (FaaS) is not just an ecosystem – it is a parallel economy, built on the same principles as Amazon, Fiverr, and Shopify, but optimized for identity crime.

The result is a dramatic shift in the threat landscape: lower entry barriers, lower operational costs, and attacks that scale instantly. Fraud is no longer limited by human capability – it is limited only by how quickly these marketplaces can generate new products.

This blog exposes how the FaaS ecosystem actually works, what is available inside these marketplaces, and why the industrialization of fraud is reshaping digital risk.

Modern identity fraud now operates like a consumer marketplace

The biggest misconception about digital crime is that it is messy, unstructured, and technically demanding. The truth is the opposite.

Today’s fraud marketplaces offer:

- User accounts with dashboards, order history, customer tickets

- Subscription plans (“Basic,” “Pro,” “Enterprise”)

- Tiered pricing by volume, geography, and document type

- Built-in automation (bots, scripts, testing tools)

- 24/7 support via Telegram or live chat

- Refund guarantees for non-working identities or scripts

- Tutorials & onboarding with step-by-step videos

The experience mirrors legitimate SaaS:

- “Upload your target list here.”

- “Select your document pack.”

- “Choose your delivery format (PNG, PDF, MP4 liveness).”

- “Add to cart → Check out with crypto → Instant delivery.”

And like Fiverr, each vendor specializes. There are providers for:

- Latin American passports

- US tax records

- UK banking profiles

- SIM provisioning

- Credit card dumps segmented by BIN and issuer

- Bots tailored specifically for major IDV vendors

Fraud hasn’t just scaled – it has industrialized.

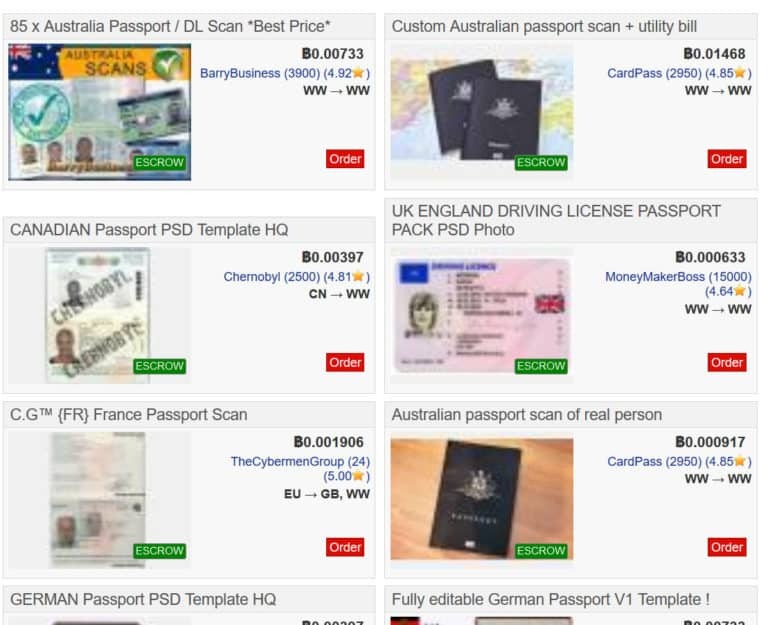

What is actually available: A catalog of the modern fraud economy

This is the part most institutions underestimate. The breadth and maturity of offerings is staggering. Here is what is openly sold across FaaS platforms – with the same clarity you’d expect from Amazon.

A. Synthetic Identity Kits

Full synthetic personas sold as complete packages:

- Name, DOB, SSN fragments, address history

- AI-generated headshots with multiple angles

- Pre-built social media history

- “Proof of life” selfies for liveness checks

- Steady digital footprint entropy (posts, likes, connections)

- Companion documents (W-2s, pay stubs, utility bills)

Vendors guarantee the profile will pass KYC at specific institutions.

And the price range? $25–$200 per profile.

B. Document Forgery Packs

These aren’t crude Photoshopped IDs. They include:

- High-resolution PSD templates for global passports and licenses

- Embedded barcodes, holograms, MRZ zones

- Configurable fields auto-filled via AI

- Companion video packs for selfie + document flow (“blink & tilt liveness”)

Some vendors offer automated generation APIs: “Generate 1,000 EU passports → Deliver in 40 seconds.”

C. Phishing Kits

Pre-built phishing engines with:

- Domain spoofing

- Hosting included

- Real-time dashboard showing captured credentials

- Auto-forwarded MFA codes

- Scripted call-center dialogue for social engineering ops

Price: $10–$50 per campaign, often with free updates.

Many platforms now include “Fraud-GPT” engines – fraud-tuned GenAI models capable of producing tailored scam messages, emotional manipulation scripts, romance-fraud personas, and real-time social-engineering dialog. These systems can hold multi-turn conversations with victims while dynamically adjusting tone, urgency, and narrative to increase conversion rates.

D. Botnets & Automation Engines

Not just credential stuffing – full operational bots:

- Session replay

- Checkout automation

- Device emulation

- Behavioral mimicry (typing cadence, cursor drift, hesitation modeling)

- “IDV bypass bots” trained on top vendors’ workflows

These bots now learn from failure and retry with adjusted parameters.

E. Account Takeover Kits

Just add username and phone number. These bundles include:

- OTP interception

- SIM swap partners

- Credential validation bots

- Reset-flow bypass templates

- Email change scripts

They are marketed explicitly: “ATO at scale. 94% success rate on XYZ bank. Guaranteed replacement if blocked.”

F. Credit Card & PII Marketplaces

Highly organized product categories:

- “Fresh fullz, US only, 2025–2026” (“fullz” is fraudster lingo for full identity information)

- “High-limit BINs”

- “Verified employer + income”

- “Vehicle registration data”

- “Adult site password dumps”

Every item has age, source, and validity score.

G. Ransomware-as-a-Service

Turnkey operations:

- Payload builder

- Negotiation scripts

- Hosting

- Payment infrastructure

- Revenue share with the platform (typically 20–30%)

What This Actually Means: Fraud Is No Longer Human

When you step back from the catalog of available tools, one truth becomes impossible to ignore: fraud is no longer defined by human capability. It is defined by the capabilities of the systems that now produce and distribute it.

Every component of the fraud economy – identity creation, verification bypass, account takeover, social engineering, automation – has been modularized, optimized, and packaged for scale. The human actor is no longer the limiting factor. The marketplace provides the expertise, the automation provides the execution, and the criminal business model provides the incentive structure.

The result is a threat landscape that looks less like episodic misconduct and more like a supply chain. Fraud behaves like a coordinated operation, not a series of individual attempts. It adapts quickly, repeats consistently, and expands effortlessly – because the work is performed by tools, not people.

This is why traditional controls struggle. Identity verification was built on the assumption that inconsistencies, friction, and human error would reveal risk. But the industrialization of fraud produces identities that are consistent, documents that are polished, and behavioral patterns that are machine-stable. What used to feel like a red flag – a clean file, a frictionless onboarding journey – is now a symptom of a system-generated identity.

The deeper consequence is strategic: the attacker no longer “thinks” like a human adversary. They probe controls the way software tests an API. They run parallel attempts the way a product team runs A/B tests. They scale operations the way cloud infrastructure scales workloads. And because their tooling is continuously updated, their learning curve is steep – while defenses remain constrained by review cycles, risk committees, and static models.

Conclusion: Digital Identity Must Now Be Proven Through Context

For financial institutions, the rise of Fraud-as-a-Service has exposed the limits of a decades-old assumption: that identity can be validated by inspecting individual attributes. In an industrialized fraud economy, every discrete signal – documents, device profiles, PII, behavioral cues – can be purchased, replicated, or simulated on demand. A synthetic identity can now satisfy every checkbox a traditional onboarding flow requires.

What it cannot reliably produce is contextual coherence.

Real customers exhibit history, relationships, communication patterns, platform interactions, and digital residue that accumulate organically. Their identities make sense across time, across channels, and across environments. Their behavior reflects inconsistency, natural drift, and the kinds of imperfections that automated systems struggle to fabricate.

Synthetic identities, even sophisticated ones, tend to be:

- too uniform,

- too compressed in time,

- too symmetrical,

- too detached from broader signals in the digital ecosystem.
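"Too uniform" can be made measurable in several ways. One simple, illustrative option is the coefficient of variation (standard deviation divided by mean) of the intervals between events such as keystrokes or logins, since scripted activity tends toward near-constant spacing. The 0.1 cut-off below is an assumption for illustration, not a validated threshold, and timestamps are assumed to be sorted in ascending order.

```python
import statistics


def timing_cv(timestamps: list[float]) -> float:
    """Coefficient of variation of gaps between consecutive event timestamps.

    Assumes timestamps are sorted ascending.
    """
    gaps = [b - a for a, b in zip(timestamps, timestamps[1:])]
    if len(gaps) < 2:
        raise ValueError("need at least three timestamps")
    mean = statistics.mean(gaps)
    if mean == 0:
        raise ValueError("all events share one timestamp")
    return statistics.stdev(gaps) / mean


def looks_machine_stable(timestamps: list[float], cv_cutoff: float = 0.1) -> bool:
    """Flag event streams whose spacing is suspiciously regular."""
    return timing_cv(timestamps) < cv_cutoff


if __name__ == "__main__":
    scripted = [0, 100, 200, 300, 400]        # perfectly even spacing (ms)
    human = [0, 80, 310, 390, 700, 760]       # irregular, organic spacing (ms)
    print(looks_machine_stable(scripted), looks_machine_stable(human))
```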

This is the gap FIs must now address. Identity is no longer something you confirm once. It is something you understand – continuously – by examining whether its story holds together.

The operational shift is simple to articulate, harder to execute:

Verification must move from checking attributes to validating coherence.

Does the identity align with long-term behavioral patterns?

Does the footprint exist beyond the onboarding moment?

Does it behave like a human navigating life, or a system navigating workflows?

Does it fit the context in which it appears?

Fraud has become industrial. Identity fabrication has become automated. What separates real from synthetic is no longer the presence of data, but whether that data forms a believable whole.

Financial institutions that recalibrate their controls toward coherence – contextual, cross-signal intelligence – will be positioned to detect what Fraud-as-a-Service still struggles to imitate: the complexity of genuine human identity.


In an AI-versus-AI world, timing is everything. The earlier your system understands an identity, the sooner you can stop the threat.

Heka Raises $14M to Bring Real-Time Identity Intelligence to Financial Institutions

FOR IMMEDIATE RELEASE


Windare Ventures, Barclays and other institutional investors back Heka’s AI engine as financial institutions seek stronger defenses against synthetic fraud and identity manipulation.

New York, 15 July 2025

Consumer fraud is at an all-time high. Last year, losses hit $12.5 billion – a 38% jump year-over-year. The rise is fueled by burner behavior, synthetic profiles, and AI-generated content. But the tools meant to stop it – from credit bureau data to velocity models – miss what’s happening online. Heka was built to close that gap.

Inspired by the tradecraft of the intelligence community, Heka analyzes how a person actually behaves and appears across the open web. Its proprietary AI engine assembles digital profiles that surface alias use, reputational exposure, and behavioral anomalies. This helps financial institutions detect synthetic activity, connect with real customers, and act faster with confidence.

At the core of Heka’s web intelligence engine is an analyst-grade AI agent. Unlike legacy tools that rely on static files, scores, or blacklists, Heka’s AI processes large volumes of web data to produce structured outputs like fraud indicators, updated contact details, and contextual risk signals. In one recent deployment with a global payment processor, Heka’s AI engine caught 65% of account takeover losses without disrupting healthy user activity.

Heka is already generating millions in revenue through partnerships with banks, payment processors, and pension funds. Clients use Heka’s intelligence to support critical decisions from fraud mitigation to account management and recovery. The $14 million Series A round, led by Windare Ventures with participation by Barclays, Cornèr Banca, and other institutional investors, will accelerate Heka’s U.S. expansion and deepen its footprint across the UK and Europe.

“Heka’s offering stood out for its ability to address a critical need in financial services – helping institutions make faster, smarter decisions using trustworthy external data. We’re proud to support their continued growth as they scale in the U.S.,” said Kester Keating, Head of US Principal Investments at Barclays.

Ori Ashkenazi, Managing Partner at Windare Ventures, added: “Identity isn’t a fixed file anymore. It’s a stream of behavior. Heka does what most AI can’t: it actually works in the wild, delivering signals banks can use seamlessly in workflows.”

Heka was founded by Rafael Berber, former Global Head of Equity Trading at Merrill Lynch; Ishay Horowitz, a senior officer in the Israeli intelligence community; and Idan Bar-Dov, a fintech and high-tech lawyer. The broader team includes intel analysts, data scientists, and domain experts in fraud, credit, and compliance.

“The credit bureaus were built for another era. Today, both consumers and risk live online. Heka’s mission is to be the default source of truth for this new digital reality – always-on, accurate, and explainable,” said Idan Bar-Dov, the Co-founder and CEO of Heka.

About Heka

Heka delivers web intelligence to financial services. Its AI engine is used by banks, payment processors, and pension funds to fill critical blind spots in fraud mitigation, credit decisioning, and account recovery. The company was founded in 2021 and is headquartered in New York and Tel Aviv.

Press contact

Joy Phua Katsovich, VP Marketing | joy@hekaglobal.com

Rethinking the Fraud Stack: A Framework for Stronger Signals and Better Decisions


The ‘Klarna Glitch’ Wasn’t a Glitch: The Fraud Playbook That Bypassed Traditional KYC
